Topic Hub

GPU & LLM Autoscaling

GPU, AI, and LLM autoscaling guidance for inference workloads on Kubernetes.

Kedify Extends KEDA with Predictive, Vertical, and Multi-Cluster Autoscaling for Modern Workloads

March 23, 2026

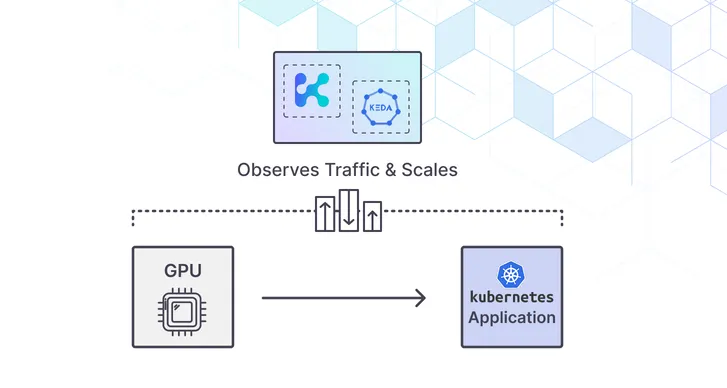

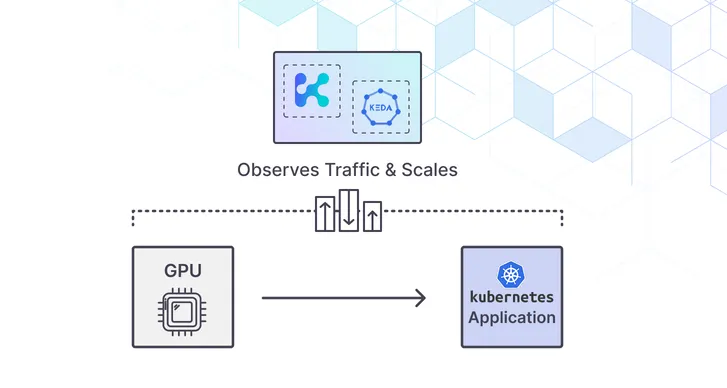

How to Autoscale GPU and LLM Workloads on Kubernetes

November 05, 2025

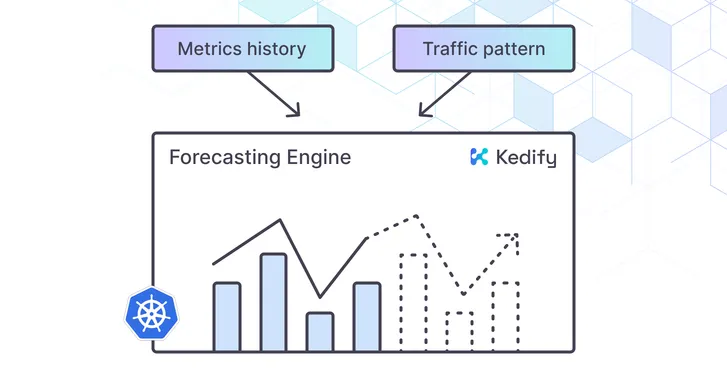

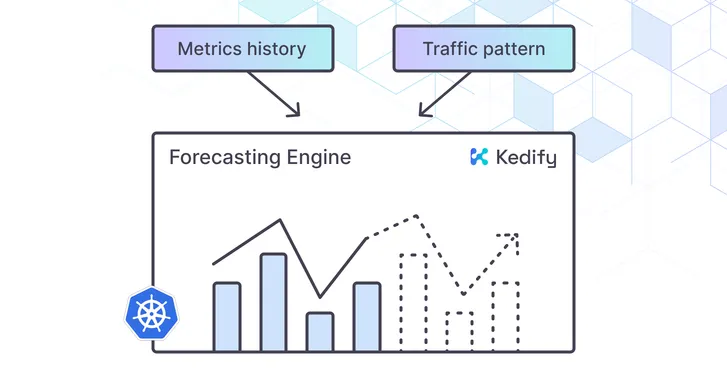

Predictive Autoscaling for Kubernetes: Scale Before Traffic Spikes

October 23, 2025

From Intent to Impact: Introducing The 2025 Kubernetes Autoscaling Playbook

October 22, 2025

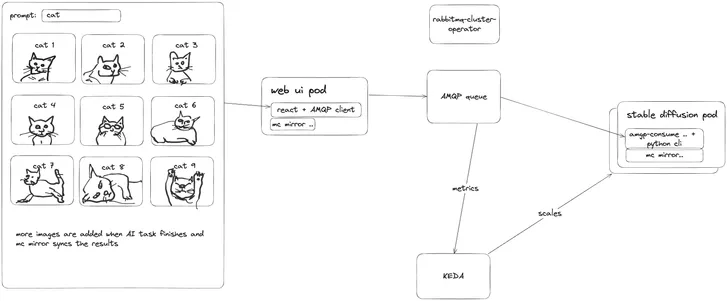

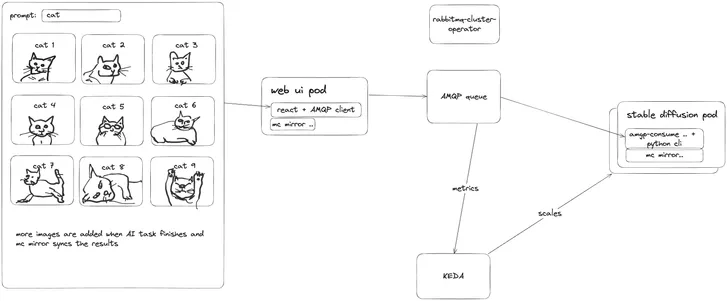

Autoscaling AI Inference Workloads to Reduce Cost and Complexity

June 14, 2024